Why Brands Prioritize Risk Over Reach in 2026

In 2026, reach is no longer scarce. Attention is.

That’s why narrative control is becoming increasingly important.

Remember when a million impressions used to signal success? Today, they signal exposure. But if those million impressions are powered by a toxic trend, a misleading clip, or an AI-generated deepfake, reach stops being an asset and becomes a liability.

That’s the shift most teams are still catching up to: the brand narrative is no longer something you publish and manage from the top down within your brand’s official social media channels. It’s something your audience creates in public, then remixes, reacts to, and distributes at scale.

This shift is forcing brand managers and communication leads to rethink their social listening strategies. Traditional KPIs that used to focus on growth and engagement are out, making room for a new metric: narrative resilience.

The question is no longer “How many people saw us?” but “What did they see, and how did it frame us?”

In a video-first ecosystem, reputation risk scales with reach. That’s why many believe that in 2026, brands will pay more attention to risk than reach, prioritizing brand equity and narrative resilience over chasing views.

The Control Paradox: Shaping the Uncontrollable

Brands can’t really control how audiences talk about them or use their content. But they can influence how their brand appears within visual culture. Today, a brand logo might appear in a viral parody or an AI-generated video that reframes the brand’s identity overnight. This new ‘unowned narrative’ is a space where brand meaning is shaped by millions of viewers outside corporate control.

The paradox is clear: you can’t control the audience and you shouldn’t even try. Marketing executives actively seek user-generated content, viral videos, and authentic creators who start conversations that no campaign could script. But on the other hand, they can’t allow the crowd to turn a carefully curated content strategy into chaotic, contextless content that chips away at brand credibility.

So what should you do? You have to shape the visual sentiment surrounding your brand. That can’t be done with legacy social listening tools, because they only analyze text and can’t reflect real-time sentiment as it is presented in video. To fully understand not just what people say, but how they show your product across short-form video platforms, you need a video-first social listening tool.

As Ofer Familier, Co-founder & CEO of Dig, explains:

“Social video builds and breaks reputations faster than any other medium. Our mission is to give brands immediate, precise visibility into those narratives.”

For brand managers, this new category of in-video social listening translates into a new defensive strategy of protecting equity in a world where visual perception spreads faster than official messaging. Reputation is no longer in the hands of the brand. It’s seen, interpreted, and amplified by online creators in real time.

Why Metadata Monitoring is a 2026 Liability

Most social media monitoring tools still rely on metadata such as captions, hashtags, and keywords. But discovery in 2026 is driven by video content, and the text often has very little to do with it. Viral trends rarely start with a hashtag. They start with a visual pattern, like a gesture, a format, or a framing style that signals membership in a cultural moment. By the time a keyword shows up in a report, the narrative has already scaled. Crises don’t wait for hashtags to announce themselves. They hit the feed first.

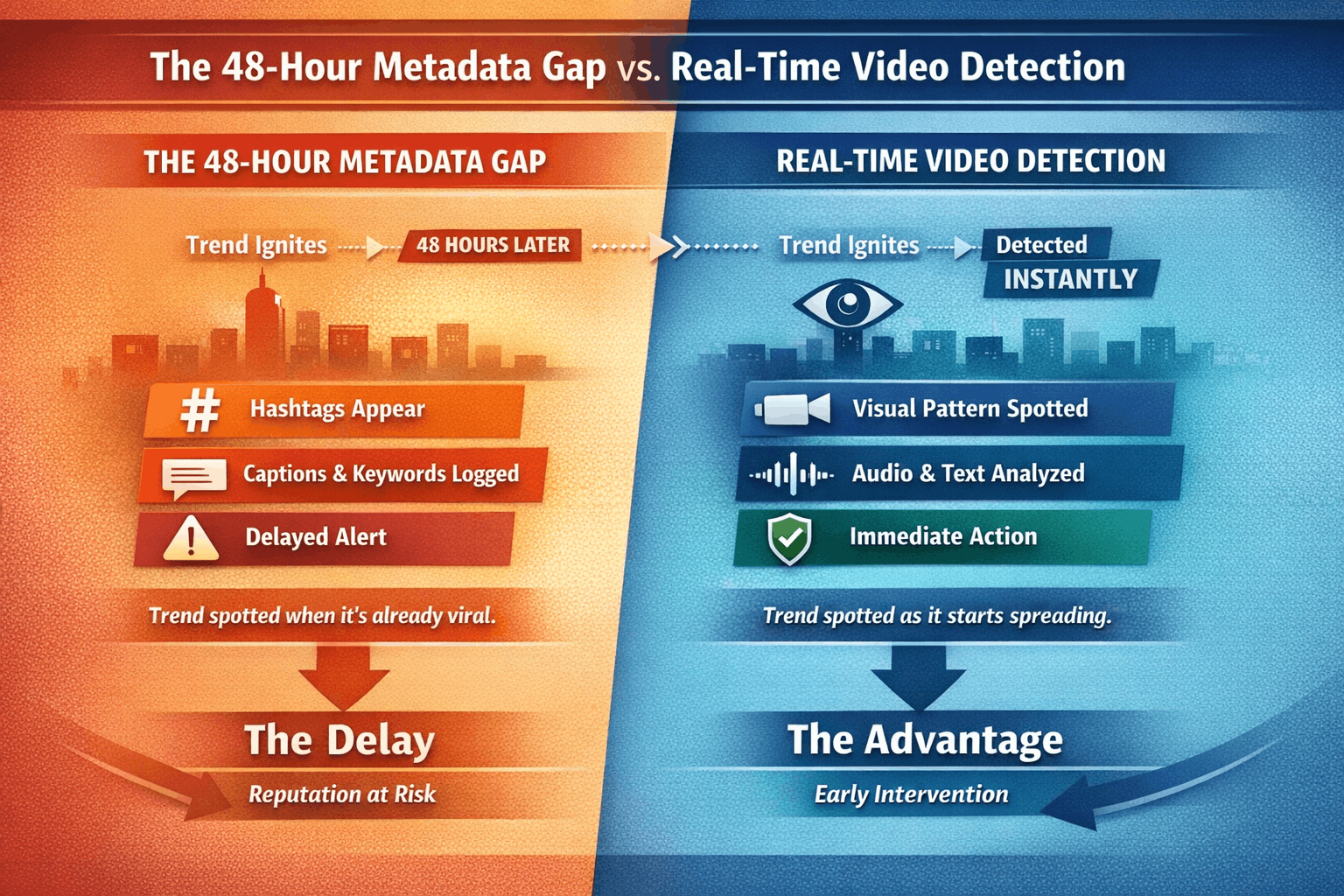

This creates what can be called the “metadata gap”, the lag between when a visual trend begins and when traditional monitoring systems register it as relevant. That lag is where warning signs get missed and where reputational risk can escalate into lasting damage before you even get a chance to respond.
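One way to make the metadata gap concrete is to measure it as a time difference. The sketch below is illustrative only: it assumes you already have timestamps for when a brand was first seen inside a video and when it was first named in a caption or hashtag. The function name `metadata_gap` and the sample timestamps are hypothetical and not taken from any specific tool.

```python
from datetime import datetime, timedelta

def metadata_gap(visual_sightings, text_mentions):
    """Return the lag between the first visual sighting and the first text mention.

    Both arguments are lists of datetime objects (hypothetical inputs).
    Returns None if either list is empty, since no gap can be measured.
    """
    if not visual_sightings or not text_mentions:
        return None
    return min(text_mentions) - min(visual_sightings)

# Made-up example: the brand shows up in videos on January 5,
# but the first caption or hashtag naming it appears on January 6.
visual = [datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 11, 30)]
text = [datetime(2026, 1, 6, 14, 0)]

gap = metadata_gap(visual, text)
print(gap)  # 1 day, 5:00:00
```

In this made-up case, a team relying only on text signals would have learned about the trend 29 hours after it first appeared on screen.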

Real-time social listening needs to move beyond text and into visual analysis. That’s exactly where in-video social listening comes in. Instead of asking “Is the brand mentioned?”, in-video social intelligence programs ask “Is the brand being shown, and in what context?”. We said it before and we can’t stress this enough: in a video-native environment, perception is formed through imagery long before it’s formalized through language.

What You Will Learn

- The CPM Myth: Why “Cost Per Mille” is being replaced by “Cost of Crisis Averted”.

- Multimodal Intelligence: How to move from tracking keywords to decoding signals across video, text, images, audio, and comments.

- Narrative Stewardship: Practical ways to influence the unowned narrative around your brand before it shapes you.

- Tooling for 2026: Why social media monitoring tools must become video-native, rather than keyword dashboards, to protect reputation.

The Strategic Pivot: Video Intelligence

The Algorithm Doesn’t Read, It Watches

Platforms no longer prioritize text signals alone. They prioritize visual hooks like faces, gestures, objects, and aesthetic cues that capture attention instantly. If your monitoring platform only tracks text mentions, you are missing where narratives actually form in 2026: inside the video. For a Social Media Insights Manager, that means the earliest indicators of narrative risk show up before the words do, through what people see, mimic, and remix. This isn’t a blind spot - it’s a blindfold.

Decoding “Aesthetic Sentiment”

Sentiment isn’t just positive or negative text. It’s aesthetic. If your product is shown in a premium, aspirational context - oh happy day! But what if it’s associated with a low-quality viral trend that subtly cheapens brand positioning? These visual associations shape perception more powerfully than written reviews, and you can find yourself with a brand whose reputation has been chipped and cracked.

Aligning visual data with brand positioning allows you and your team to detect when aesthetic sentiment begins drifting away from intended brand values long before traditional sentiment analysis flags this as an issue.

The Rise of Synthetic Risk

AI-generated content is expanding the risk surface for every brand. Deepfakes, synthetic voiceovers, and AI parodies can spread rapidly while appearing authentic to viewers. AI-powered, in-video social listening enables early identification of these AI-driven risks by detecting anomalous visual patterns and unusual narrative spikes. This allows communications teams to intervene before manipulated content defines the public perception of the brand.

Proactive Reputation Management

In a video-first environment, reputation shifts often begin with micro-behaviors: a recurring gesture, a framing style, or a meme format that undermines brand values and reframes how audiences perceive a product. By tracking these visual micro-signals, brands can anticipate narrative shifts before they become mainstream conversations. Proactive reputation management means moving from traditional sentiment forecasting via text to identifying early visual cues that can signal a shift in perception.

Bridging the Insights Gap

The final evolution of in-video social intelligence is its ability to feed insights back into product and R&D decisions. Visual trends reveal how consumers and potential clients actually use, display, and interpret products in real-world contexts. This closes the gap between social listening and innovation, turning narrative signals into much-needed, actionable input for product development.

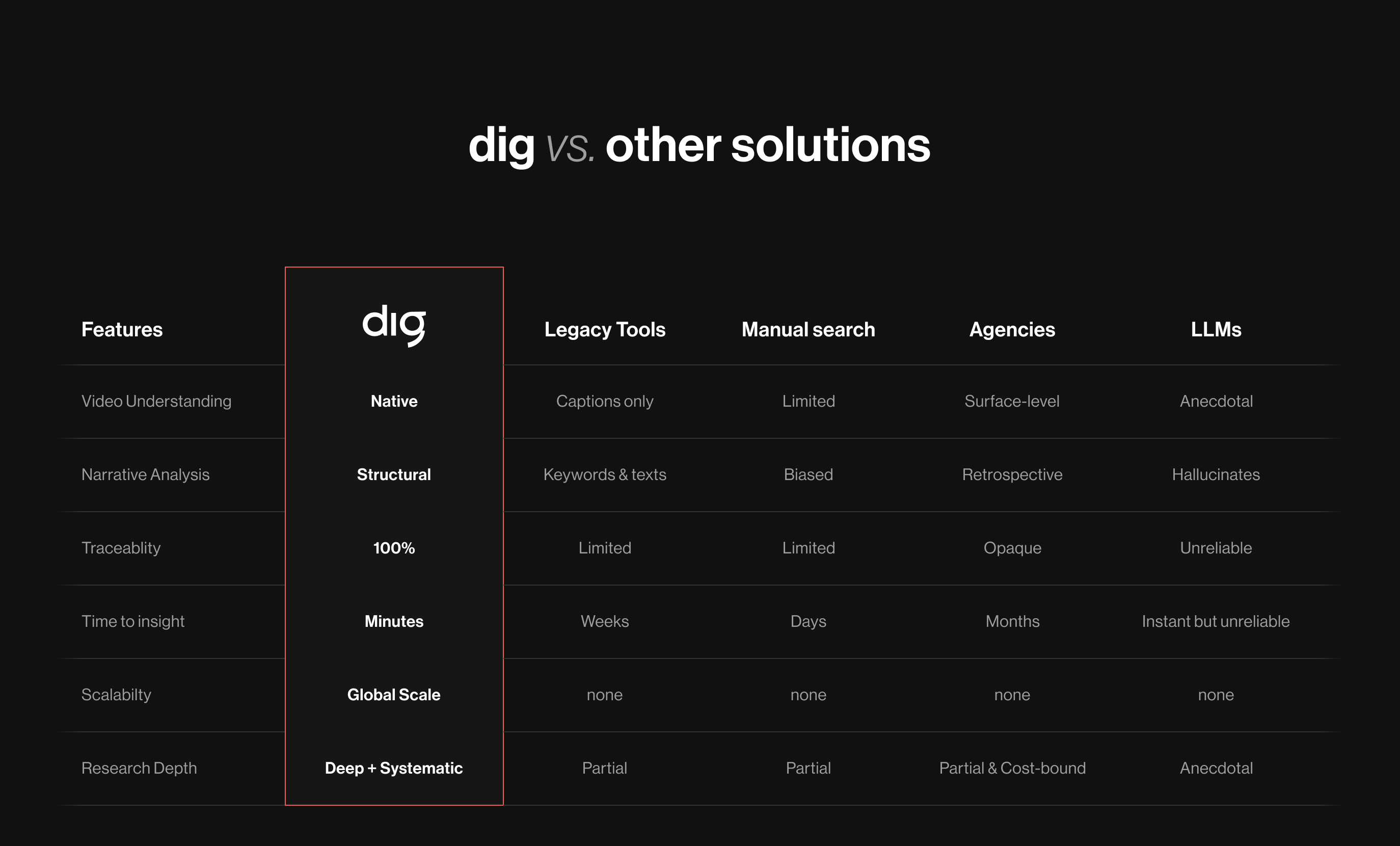

Visual Intelligence vs. Keyword Tracking

Traditional social listening tools were built for a text-first internet. However, in a video-native ecosystem, risk rarely begins as a keyword. It usually starts with a meme format, a visual mention, or a contextual edit that reshapes perception before anyone names it. This is where legacy tools struggle, while in-video, AI-powered social listening platforms like Dig excel: traditional monitoring systems track conversations, whereas visual intelligence decodes narratives in real time.

Why Text-Based Tools Leave You Blind

Keywords are lagging indicators. By the time a phrase becomes a hashtag, the narrative has already spread through video reactions, remixes, and visual cues that text-only systems can’t detect. Relying solely on legacy social media listening tools or traditional media monitoring software means discovering risk only after it has scaled - and by then, the damage is already done. Effective sentiment tracking on social media now requires multimodal analysis: decoding what’s being said, what’s being shown, and how it’s framed.

Real-Time Visual Guardrails

AI-powered social listening acts as an early warning system for visual platforms. Instead of waiting for explicit mentions, real-time, in-video social listening can surface unusual visual patterns, sentiment shifts, and narrative spikes as they emerge. This enables communications teams to intervene while perception is still forming, rather than reacting once a narrative becomes dominant - shifting brands from retrospective reporting to proactive risk detection.
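To illustrate what surfacing a "narrative spike" could look like in practice, here is a minimal sketch. It assumes an upstream system (not shown, and hypothetical) already produces an hourly count of videos in which the brand is visually detected. The rolling z-score used here is a generic statistical technique chosen for illustration, not a description of how any particular platform works. The function name `find_spikes` and its `window` and `threshold` parameters are assumptions.

```python
from statistics import mean, stdev

def find_spikes(counts, window=6, threshold=3.0):
    """Return indexes of hours whose count is unusually high versus the prior window.

    counts: hourly numbers of videos showing the brand (hypothetical input).
    An hour is flagged when it sits more than `threshold` standard deviations
    above the mean of the preceding `window` hours.
    """
    spikes = []
    for i in range(window, len(counts)):
        baseline = counts[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Assumption: with a perfectly flat baseline, any increase is flagged.
            if counts[i] > mu:
                spikes.append(i)
            continue
        if (counts[i] - mu) / sigma > threshold:
            spikes.append(i)
    return spikes

# Made-up data: a quiet baseline, then a sudden jump in hour 8.
hourly = [4, 5, 3, 4, 6, 5, 4, 5, 31, 6]
print(find_spikes(hourly))  # [8]
```

A flagged hour is only a prompt for human review: the count says that something changed, not whether the change helps or harms the brand.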

Building a Narrative Toolkit

Protecting reputation and building narrative resilience needs more than just risk monitoring; it requires guiding how creators visually represent your brand. By providing clear visual assets, contextual cues, and creative frameworks - much like a visual extension of the brand book - brands can influence how user-generated content evolves without restricting authenticity. This approach combines defined response strategies with proactive narrative stewardship, ensuring that when audiences create, remix, and share - they do so within a context that reinforces brand credibility.

2026 Technology Audit: Dig vs. Legacy Tools

Key Takeaways

- Reach is cheap; reputational risk is expensive.

- Hashtags are lagging signals; visual context reveals risk in real time.

- In-video social intelligence is essential to detect narrative shifts before they scale.

- Visual sentiment tracking enables proactive reputation protection instead of reactive crisis response.

- In a video-first ecosystem, in-video social listening is the only way to safeguard your brand.

The New ROI: Narrative Resilience

Narrative resilience is becoming the defining ROI for 2026, since brand meaning is increasingly shaped outside the brand’s official channels. When audiences react, remix, or contextualize content through video, they are not just amplifying reach - they are actively reframing perception. Measuring success now requires monitoring not only comments and mentions, but also visual usage patterns, aesthetic sentiment, and emerging narrative cues through advanced in-video social listening programs and real-time social intelligence capabilities.

This shift reframes reputation management from reactive crisis control to proactive narrative stewardship. By using AI-powered, in-video social listening for sentiment analysis, brands can monitor how online creators engage with their content, identify perception shifts early, and intervene before harmful narratives scale. That’s why in 2026, the true return on investment isn’t reach - it’s risk reduction: safeguarding the durability and integrity of the brand story across fast-moving, video-first social media platforms.


Frequently Asked Questions About Attention to Risk Over Reach (FAQ)

What are the most important business predictions for 2026 regarding brand risk?

A primary prediction for 2026 is the total decoupling of "Reach" and "Trust". In the 2026 economy, reach has become a commodity easily inflated by synthetic AI traffic, making it a "vanity metric" that often masks underlying reputational threats. Brands are shifting to risk-centric KPIs because a single unmonitored viral video can cause the brand more equity damage than 10 million positive impressions can repair. By prioritizing Narrative Resilience, brand managers can ensure high visibility doesn't lead to "Contextual Poisoning" or algorithmic shadowbanning.

What is "Visual Sentiment" and why is it essential for 2026 trends?

Visual Sentiment is an advanced form of social listening sentiment analysis that analyzes the content within video frames rather than just text. It uses computer vision to evaluate the setting, the lighting, and the creator’s emotional cues. This allows AI-powered in-video social listening platforms such as Dig to detect whether a brand is being portrayed in a premium, aspirational context or whether its reputation is being compromised by "Aesthetic Risk" or "De-influencing" trends, which are predicted to be among the most important ideas for 2026 marketing.

How do social media monitoring tools handle narratives without hashtags in 2026?

Modern social media monitoring tools have moved toward Multimodal AI and Interest Graph analysis because 2026 discovery algorithms prioritize "Visual Hooks" over searchable tags. To manage narratives, brands must use real-time in-video social intelligence that "watches" the content. This involves automated object recognition and speech-to-text processing to identify brand presence inside video, allowing Communications Leads to catch a narrative shift hours, sometimes days, before it ever trends as a text-based keyword.

What will change your life in 2026 when it comes to brand management?

The technology that will redefine brand management in 2026 is AI-Powered Video Intelligence. In a video-first world, this tool provides a "Predictive Reputation Intelligence" layer that legacy tools cannot match. For enterprise organizations, the benefit lies in "Narrative Stewardship", that is, the ability to detect "Synthetic Signals" (AI-generated content) that precede a mass-market shift in consumer perception. This enables Social Media Insights Managers to provide product and development teams with raw data on how a product is actually being used, turning risk monitoring into a strategic engine for growth.
