The problem with traditional tools

They can't see inside the video

Legacy tools are limited: they weren’t built for video. They can’t interpret the tone, reactions, and sentiment inside a video, which is where meaning and intent take shape.

They miss cultural shifts

Trends go viral through reactions, stitches, and narratives. Text-based tools miss the context and emotional direction behind them.

They create manual work

When tools fail, teams revert to spreadsheets and screenshots. Research becomes slow, expensive, and outdated before it reaches decision-makers.

That’s why we created dig: to help you decode what happens on social and turn it into explainable, traceable insights in real time.

dig vs. other solutions

| Features | dig | Legacy Tools | Manual search | Agencies | LLMs |
|---|---|---|---|---|---|
| Video understanding | Native | Captions only | Limited | Surface-level | Anecdotal |
| Coverage | 90%+ | Limited | Limited | Limited | Only indexed pages |
| Narrative analysis | Structural | Keywords & text | Biased | Retrospective | Hallucinates |
| Traceability | 100% | Limited | Limited | Opaque | Unreliable |
| Time to insight | Minutes | Weeks | Days | Months | Instant but unreliable |
| Scalability | Global scale | None | None | None | None |
| Research depth | Deep + systematic | Partial | Partial | Partial & cost-bound | Anecdotal |

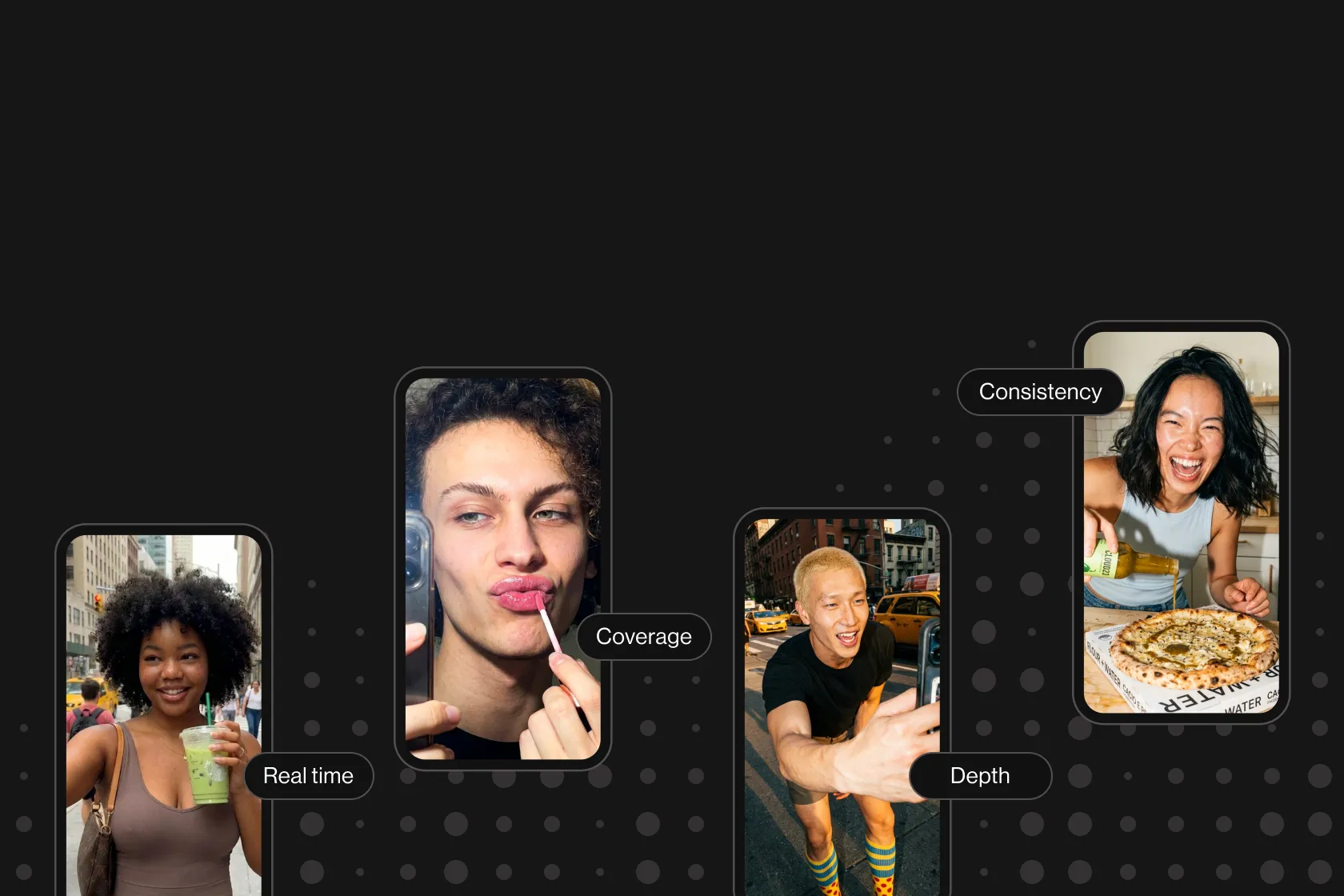

From guesswork to groundwork

With 90%+ coverage, dig is the only way to get a real, defensible understanding of what’s happening inside social video. Use dig when your decisions depend on seeing the full picture.

Got questions?

We’ve got answers.

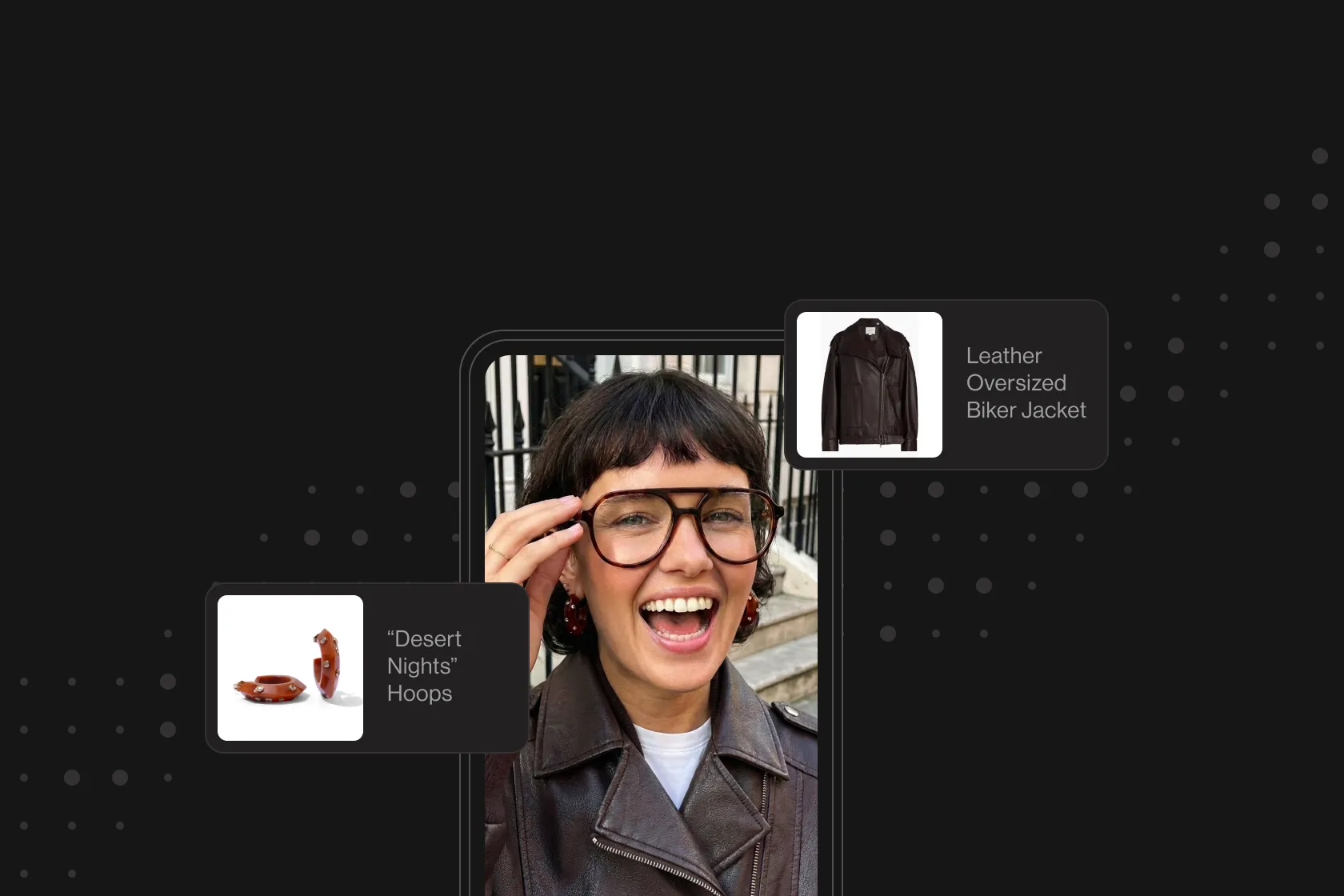

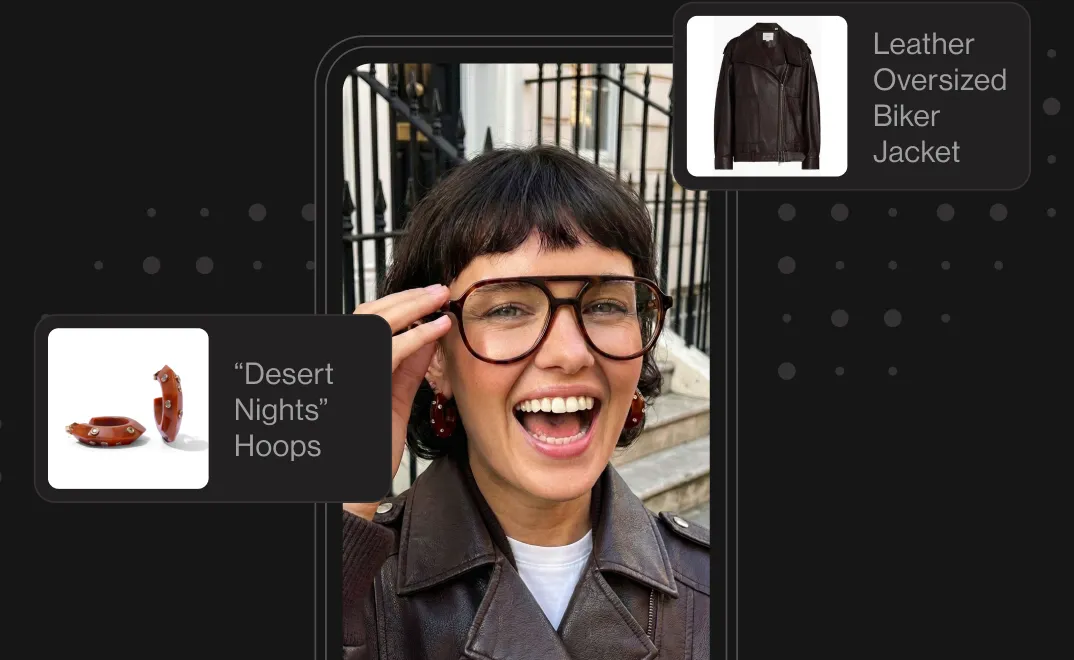

In one word: video.

Traditional social listening tools analyze text around the video: descriptions, captions, comments, hashtags.

dig analyzes what’s inside the video: visuals, speech, tone, scenes, objects, emotions, and narrative structure.

With dig, you can tell whether something is sarcasm or praise, whether the product is actually shown on screen or merely mentioned in text, and whether sentiment is positive or negative inside the video itself.

dig’s in-video analysis runs at 95% accuracy across speech, visuals, emotion, and narrative classification.

That accuracy is measured against labeled datasets and continuously improved with human-in-the-loop evaluation.

Every insight is traceable to the original video, so teams can verify exactly what the digger (the dig engine) detected.

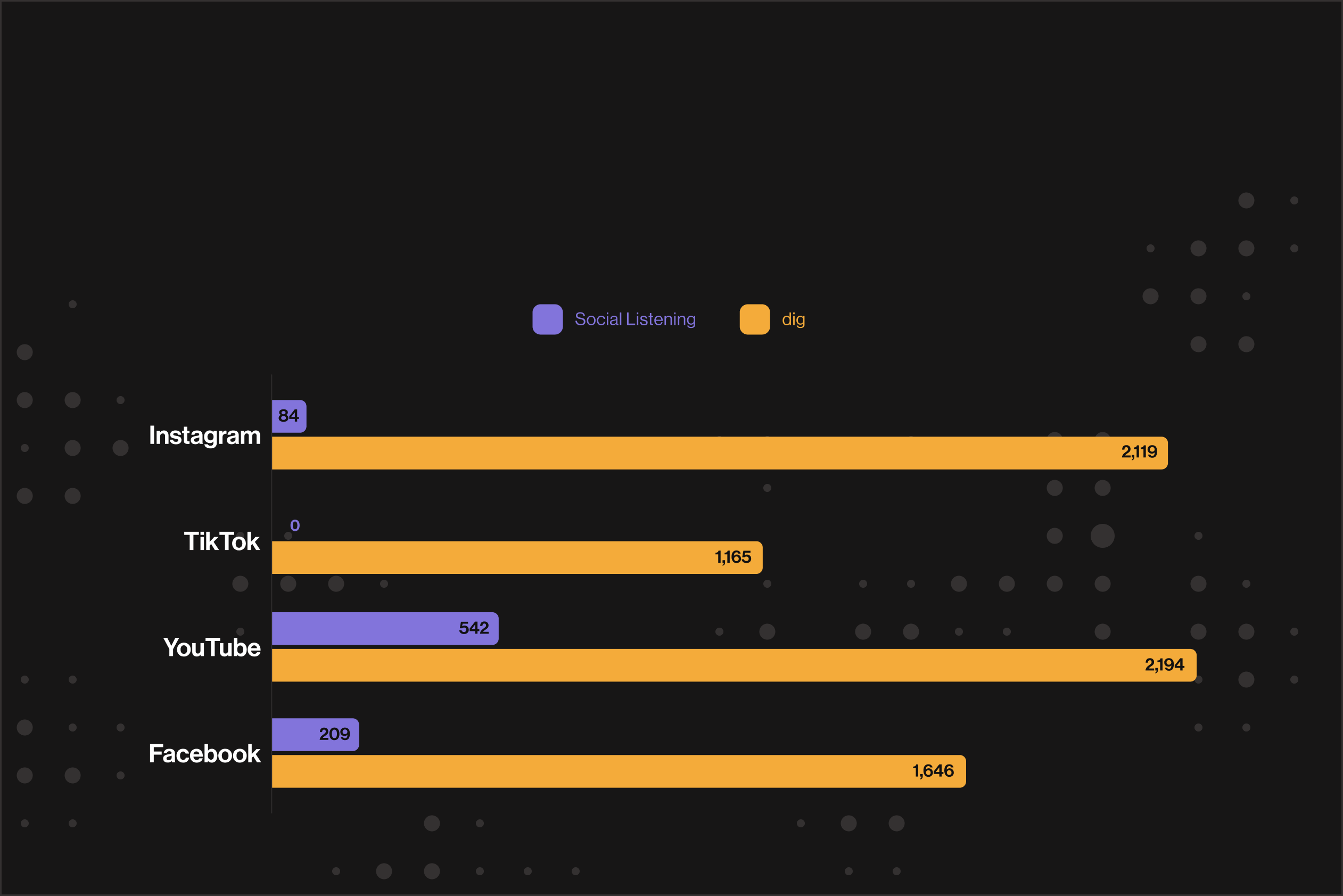

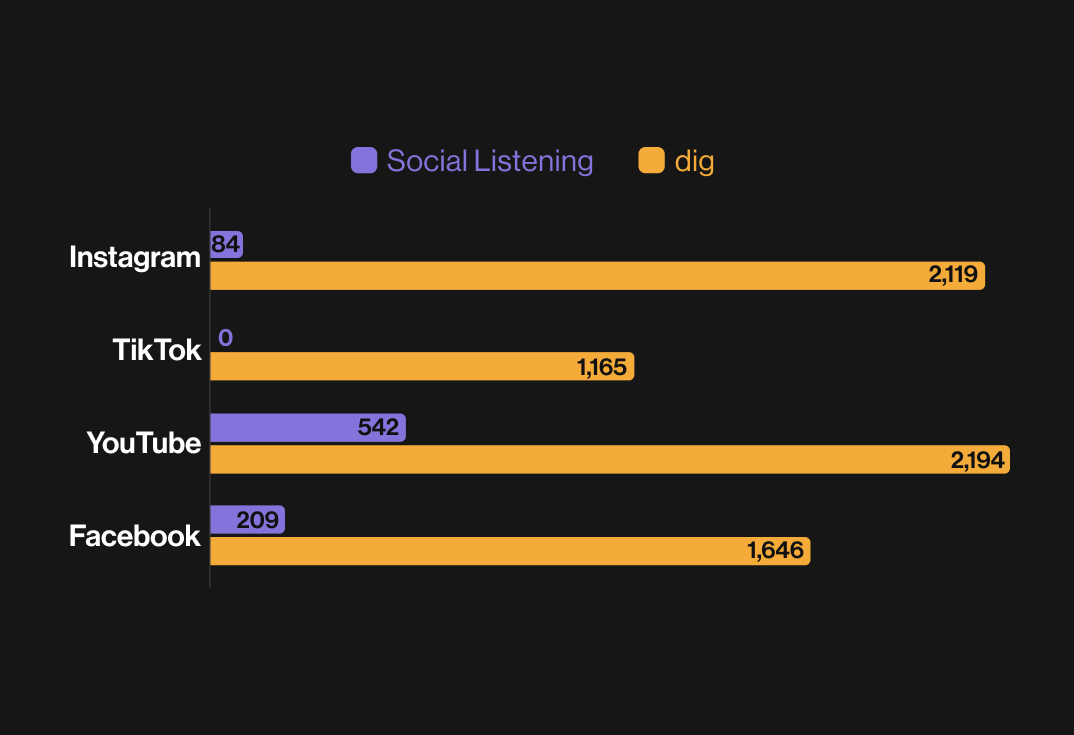

dig analyzes 90%+ of social video across major platforms, including TikTok, YouTube, Instagram, Reddit, X, and Facebook.

This is not sampling. It’s continuous, large-scale, in-depth analysis of billions of posts in real time.

LLMs summarize language, but they can’t do the same for video. They don’t see visuals and can’t catch gestures, edits, or on-screen context.

They also can’t interpret irony, reaction formats, or visual storytelling - and they sometimes hallucinate patterns without access to underlying video data.

dig is not a text model guessing about video.

It analyzes the video itself and produces insights grounded in actual footage: clickable, reviewable, and defensible - so you can make high-stakes decisions with confidence.

The short answer: minutes.

Once you’re set up, you can search in natural language, explore live narratives, and run deep research flows in the dig chatbot, with answers in minutes.

Most teams are up and running in under three hours. No Boolean query setup, taxonomy building, or manual tagging is needed.

- Brand & consumer insights teams - for culture, perception, creative direction, and trend analysis.

- CMOs and strategy leaders - for positioning, risk, and market analysis.

- Comms & PR teams - for crisis detection, reputation, misinformation, and narrative tracking.

- Agencies - for client pitches, influencer vetting, and full campaign analysis.

- Research and analytics teams - for qualitative market and consumer research at scale, without manual coding.

- Public sector and institutional teams - for public sentiment tracking, disinformation, threat detection, policy narratives, and trust monitoring across social platforms.

This isn’t faster research. It is social intelligence.

Manual video research:

- Is slow (takes weeks to months)

- Covers a fraction of what’s actually happening

- Is biased by what analysts choose to watch

- Can’t be reproduced or audited at scale

dig:

- Analyzes billions of videos in real time

- Surfaces patterns that can’t be tracked manually

- Produces traceable, source-linked insights

- Eliminates the human bottleneck

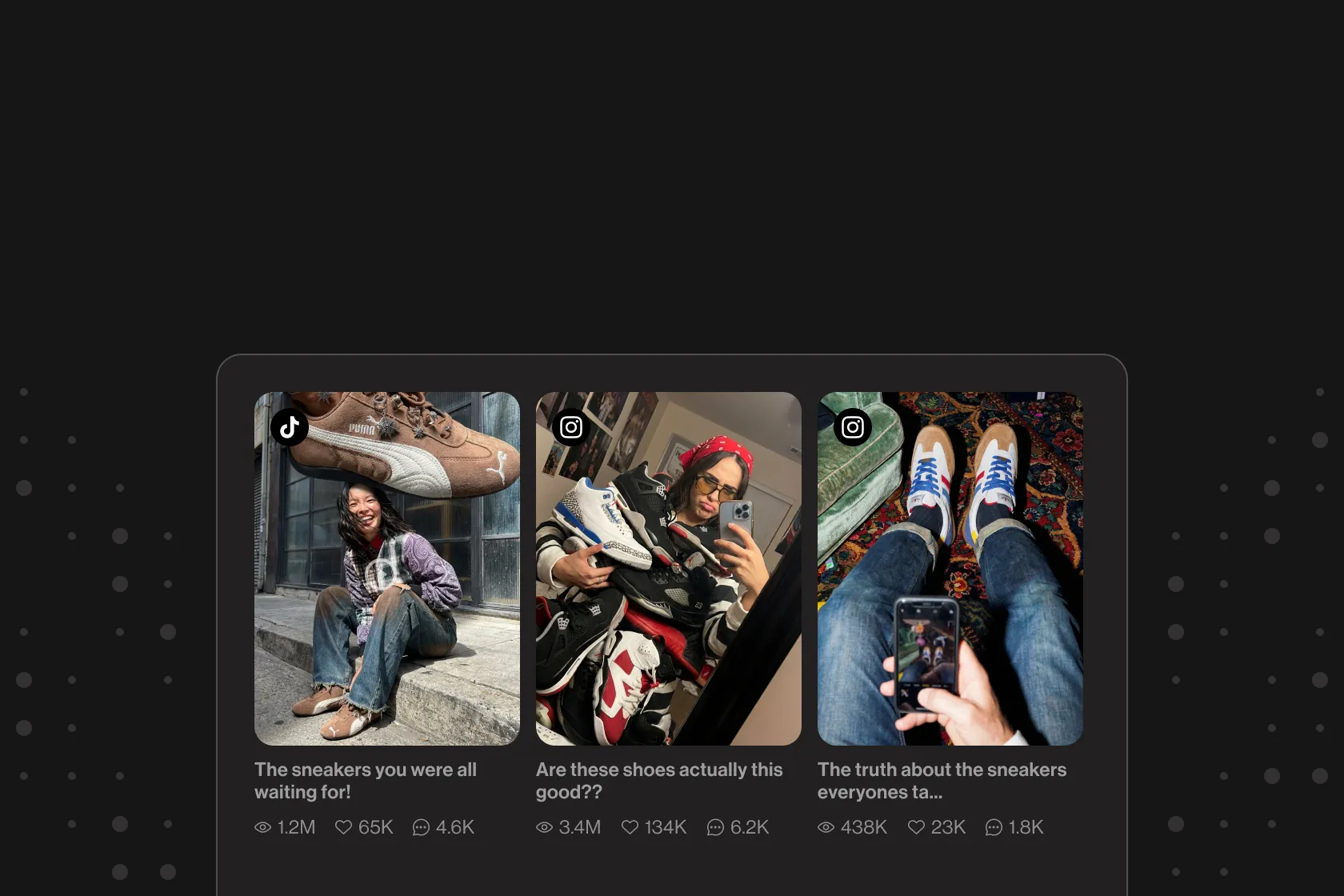

For any narrative, trend, or claim, you just click through to the exact posts behind it. You can see which creators drove it, understand how it spread, and verify the context, all in minutes.

dig's narrative intelligence detects:

- Deepfakes and manipulated content

- Counterfeit products and brand misuse

- Misinformation and harmful narratives

- Off-brand or risky creators and influencers

- Emerging negative issues before they scale

dig analyzes what is shown and said inside the video, not just the caption - and that’s why it can detect threats that text-based systems fail to catch.

Most teams only realize what they’ve been missing once they research their own category, brand, or audience with dig.

In a demo, you’ll see for yourself:

- How narratives actually appear inside video

- How insights link directly to real social videos

- How quickly you can go from question → evidence → decision

If your decisions depend on data, watching in-video intelligence take shape in real time is a game changer.