The Blind Spot in Brand Research: Why Measuring "What’s Easy" Is Costing You Market Share

Introduction: The Data Illusion in Modern Branding

Anyone who has played hide-and-seek with a toddler has seen it happen: they cover their eyes and giggle, convinced they’ve vanished. From their perspective, the logic is simple - if they can’t see you, you can’t see them. It’s a charming misunderstanding when it comes to children - not so much when it comes to your Brand.

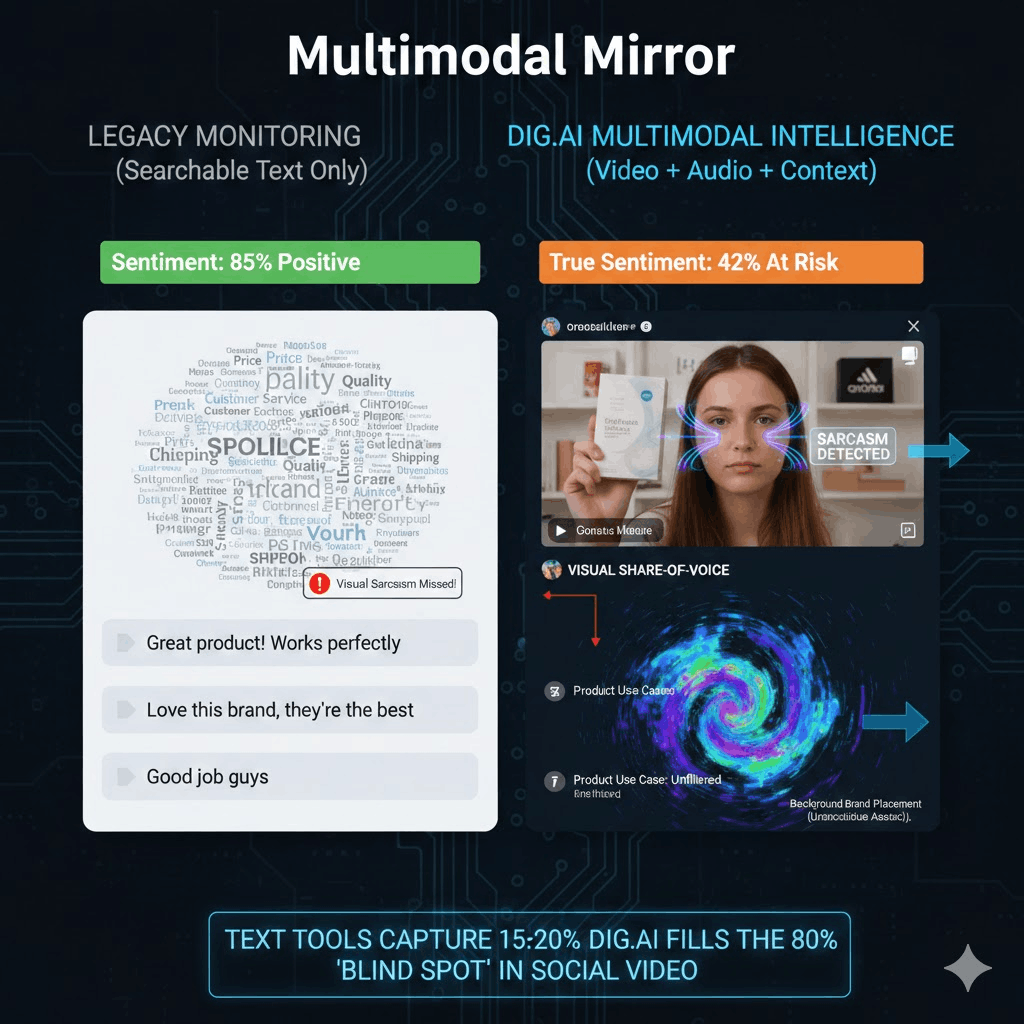

And yet, in 2026, many Brand Research teams and Consumer Insights Managers are operating with the same logic. If a signal can’t be searched in a keyword database or surfaced in a text-based monitoring dashboard, it’s treated as if it doesn’t exist. But audiences are no longer shaping brand perception primarily through text; they’re doing it through video reactions, visual references, and contextual edits that never register as searchable keywords or mentions. This is the ‘Data Illusion’ in modern branding.

Traditional social listening dashboards may look comprehensive, but they map only the conversations that are easiest to capture. Meanwhile, the narratives that actually influence consumer behavior are forming in visual culture - unseen, untagged, and unmeasured by legacy platforms. Without video signal monitoring and in-video analysis, Brand Managers aren’t seeing the full picture; they’re simply covering their eyes and hoping nothing is happening.

What You Will Learn

- Why "Text-Only" monitoring creates a false sense of security.

- How legacy tools fail to capture sentiment in TikTok, Reels, and Shorts.

- The transition from reactive monitoring to proactive Stakeholder Intelligence.

- A framework for measuring engagement that correlates with market share.

The Crisis of Legacy Brand Tracking: Why 2026 Demands More Than Text

Legacy reputation monitoring tools were built for an era dominated by blogs, forums, and searchable text. In 2026, that assumption creates a dangerous Text-Only Trap. Nearly 90% of digital discourse now unfolds inside video frames on platforms such as TikTok, Reels, and Shorts, through tone, visuals, and context that keywords won’t capture. A creator might praise your product verbally while subtly framing it next to a competitor, or use sarcasm that text-based sentiment analysis misreads as positive feedback. The result: dashboards that show brand approval while visual perception quietly erodes and brand equity takes a hit.

The same blind spot appears in how engagement is measured. Traditional tools measure content engagement via likes, shares, and comments, and treat them as signals of success, but these vanity metrics often reflect attention rather than intent. High engagement doesn't equate to brand health if the context is a visual misunderstanding your tools can’t see. To lead in 2026, brands must move beyond measuring what is convenient and start decoding multimodal reality, where reputation is shaped much more by what audiences see than by what is written.

The Evolution of Stakeholder Intelligence: Decoding the Visual Narrative

The Video Signal: How Social Video Dictates Brand Sentiment (and Why You're Missing It)

Social video now shapes your brand’s “vibe” regardless of what your official messaging says. Video contains layers of information that text can’t replicate: micro-expressions, background audio trends, and visual context. Every frame carries signals, from facial reactions and tone to visual pairings and cultural references, that subtly influence how audiences interpret your brand.

By overlooking these cues, you miss how perception is being formed and shared, and with it the entire sentiment trajectory. The real question is not whether your brand is being mentioned, but whether it is being shown in ways that reinforce or quietly distort the original messaging.

Moving from Surface-Level Vanity Metrics to Deep Narrative Analysis

Stakeholder intelligence can no longer rely on counting views, likes, or shares alone. Deep Narrative Analysis (abbreviated ‘DNA’ - coincidence? We think not) examines whether high-performing content strengthens your brand’s positioning or introduces an unintended storyline that audiences begin to adopt as truth.

dig.ai decodes the visual narrative and analyzes visual and audible nuances to reveal the ‘why’ behind the engagement. It transforms raw numbers into predictive intelligence that anticipates shifts in brand equity. This shift turns performance data into strategic foresight, prompting teams to ask whether attention is building brand equity - or quite the opposite.

Closing the Visibility Gap: How dig.ai Quantifies the Unseen

Legacy social listening tools keep brand research fundamentally retrospective, reconstructing perception only after conversations have already scaled. dig.ai operates differently, acting as real-time “sonar” for the visual ecosystem. Through multimodal AI, the platform translates raw video signals, such as visual cues, audio tone, and narrative framing, into executive-level intelligence. Instead of relying on manual reviews or fragmented reports, stakeholders receive automated narrative clustering that surfaces emerging blind spots while they are still forming, not after they have reshaped brand perception.

This approach reframes brand health from a static score into a dynamic, continuously updated narrative map. It captures how logos appear across subcultures, how products are visually framed in commentary videos, and how unspoken trends evolve within online communities. By contextualizing these signals into a single, cohesive view, dig.ai enables teams to quantify what was previously invisible: the real-time trajectory of brand sentiment across visual platforms.

Moving from text-only monitoring to video intelligence feels like shifting from 2D to 3D: suddenly, context, tone, and visual framing come into focus. But layering real-time analysis on top of that is like stepping into 4D: you’re not just seeing perception more clearly, you’re seeing it evolve as it happens. If your dashboard can’t surface video-native trends in real time, you’re still operating in two dimensions while your audience is shaping your reputation in four.

Conclusion: Adapting Your Brand Research for a Video-First Reality

The shift to video is not a passing trend; it is a structural evolution in how audiences interpret and shape brand perception. Organizations that continue to rely on legacy reputation monitoring tools risk analyzing only a fraction of the signals influencing their market position. In a video-first ecosystem, stakeholder intelligence must extend beyond text and metadata to include the context, tone, and visual narratives that now define brand meaning.

Closing the visibility gap is no longer optional; it is becoming the prerequisite for maintaining relevance and market share. By upgrading to video-first, multimodal analysis, brands can move from blindfold to 4D, and from partial analysis and retrospective reporting to real-time narrative stewardship, ensuring they are actively shaping perception rather than reacting after it has already formed.

Key Takeaways: The Future of Brand Intelligence

- The Text-Only Era Is Over: If you can’t see video signals, you’re measuring only a fraction of brand perception.

- From Vanity Metrics to Narrative Intelligence: Likes and views signal attention, while visual context reveals intent.

- From 2D to 4D Brand Research: Moving from text analysis to real-time video intelligence transforms how reputation is shaped.

- The dig.ai Difference: Multimodal AI is the new standard, and quantifying visual blind spots is now essential for proactive stakeholder intelligence in 2026.

FAQs

Why is text-based sentiment analysis no longer enough for brand tracking?

In 2026, the majority of consumer sentiment is expressed through social video rather than text alone. Text-only sentiment analysis captures captions and comments at face value, but it misses how meaning is conveyed visually - through tone of voice, facial reactions, editing styles, and contextual cues. A creator might say “Best launch ever!” while rolling their eyes, or showcase your product next to a competitor in a way that immediately reframes perception. In these cases, traditional tools record a positive signal, while multimodal analysis reveals emerging reputation risk and shifts in brand sentiment trajectory.

How does dig.ai measure sentiment in video without manual tagging?

dig.ai uses multimodal AI agents that process three data streams simultaneously:

- Visual Intelligence: Scanning for logos, product placement, and facial emotion triggers (Emotion Analytics).

- Acoustic Analysis: Decoding tone, pitch, and "vibe" to detect irony or sincerity in the spoken word.

- Semantic Synthesis: Transcribing speech into text and correlating it with visual and audio cues, so the final sentiment score reflects intent rather than the literal wording alone.

This allows for real-time monitoring of untagged content where your brand is seen but not necessarily mentioned in the caption.
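To make the idea of combining three streams concrete, here is a minimal Python sketch of one common way such signals could be merged: a confidence-weighted average with a simple disagreement check. This is an illustration only, not dig.ai's actual method. The `ModalityScore` type, the `fuse` and `streams_disagree` functions, the score ranges, and the threshold are all hypothetical, and the numbers are made up to mirror the "Best launch ever!" eye-roll example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModalityScore:
    """Sentiment estimate from one stream (hypothetical structure)."""
    name: str          # "visual", "acoustic", or "semantic"
    sentiment: float   # -1.0 (negative) to +1.0 (positive)
    confidence: float  # 0.0 to 1.0


def fuse(scores):
    """Confidence-weighted average of the per-stream sentiment values."""
    total = sum(s.confidence for s in scores)
    if total == 0:
        return 0.0  # no usable signal; treat as neutral
    return sum(s.sentiment * s.confidence for s in scores) / total


def streams_disagree(scores, threshold=1.0):
    """True when the gap between the most positive and most negative
    stream exceeds an assumed threshold, a possible hint of sarcasm."""
    if len(scores) < 2:
        return False
    values = [s.sentiment for s in scores]
    return max(values) - min(values) > threshold


# Illustrative values only: the words are positive, the delivery is not.
clip = [
    ModalityScore("semantic", 0.8, 0.9),   # transcript: "Best launch ever!"
    ModalityScore("visual", -0.6, 0.8),    # eye roll on camera
    ModalityScore("acoustic", -0.5, 0.7),  # flat, ironic tone
]

fused = fuse(clip)
needs_review = streams_disagree(clip)
```

With these made-up inputs, a text-only reading would report the semantic score of 0.8, while the fused score comes out slightly negative and the clip is flagged because the streams disagree. A production system would likely learn these weights rather than hand-set them.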

What is the difference between Monitoring and Stakeholder Intelligence?

While both use data, their utility for a Brand Manager is fundamentally different:

- Monitoring is Reactive: It functions like a rearview mirror, telling you what was said and how many people clicked "like." It is useful for post-campaign reporting but does little to prevent a crisis.

- Stakeholder Intelligence is Predictive: It uses historical narrative patterns and real-time video signals to forecast how different audience segments (stakeholders) will react to future moves. By analyzing the relationship between emerging visual trends and audience sentiment, it identifies "narrative friction" early, allowing you to pivot before a minor video trend turns into a text-based PR crisis.
